Overview

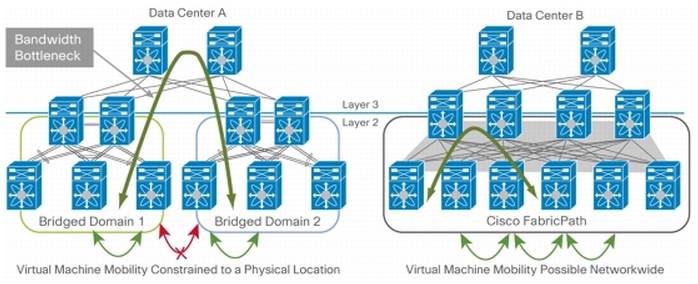

FabricPath is designed to address the shortcomings of traditional Layer 2 designs. Today, data centers must find a compromise between the flexibility offered by Layer 2 technologies and the scalability offered by Layer 3 technologies.

Layer 2 topologies are typically computed by STP because they must be loop-free. STP achieves this by blocking some ports, which results in a loss of bandwidth. A Layer 2 domain that is not loop-free leads to disaster sooner or later, and an issue at one point in a Layer 2 domain affects the entire domain. Additionally, large Layer 2 domains are more difficult to troubleshoot. For these reasons, Layer 2 is often confined to small islands that are interconnected through Layer 3.

Layer 2 Fabrics with Cisco FabricPath

The current trend is toward virtualization and consolidation, which allow multiple servers to be merged onto one physical host. This increases resource utilization, including the utilization of network resources.

FabricPath provides the foundation for a scalable fabric in which the Layer 2 domain behaves like one big switch. This gives optimal delivery of Layer 2 frames and optimal use of the bandwidth between Layer 2 ports, wherever they are located in the network. STP is not used inside a FabricPath topology.

FabricPath Routes

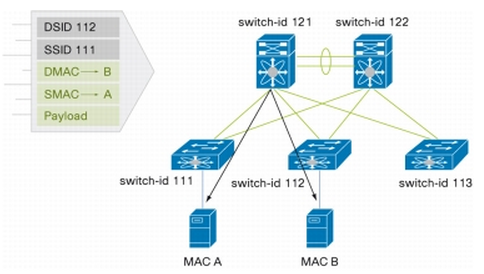

Classical Ethernet rules do not apply inside a FabricPath topology. An Ethernet frame is encapsulated inside a FabricPath header that carries routable source and destination addresses. These addresses identify the physical switch where the frame was received and the physical switch to which the frame is destined.

The encapsulated frame is routed at Layer 2 by FabricPath until it reaches the destination switch, where it is de-encapsulated and delivered.
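The encapsulate, route and de-encapsulate sequence can be illustrated with a small Python model. This is a simplified sketch, not the FabricPath wire format: the outer header here holds only the source and destination switch-IDs (the real header carries more fields), and the switch-IDs, MAC addresses and per-switch next-hop tables are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class EthernetFrame:
    src_mac: str
    dst_mac: str
    vlan: int
    payload: bytes

@dataclass(frozen=True)
class FabricPathFrame:
    # Simplified outer header: only the two addresses discussed in the text.
    src_switch_id: int    # switch where the frame entered the fabric
    dst_switch_id: int    # switch where the frame leaves the fabric
    inner: EthernetFrame  # original frame, carried unchanged

def encapsulate(frame, ingress_id, egress_id):
    return FabricPathFrame(ingress_id, egress_id, frame)

def route(fp_frame, next_hop_tables, start):
    """Walk the frame hop by hop using only the destination switch-ID."""
    path = [start]
    current = start
    while current != fp_frame.dst_switch_id:
        current = next_hop_tables[current][fp_frame.dst_switch_id]
        path.append(current)
    # De-encapsulation at the egress switch returns the original frame.
    return path, fp_frame.inner

frame = EthernetFrame("00:00:00:00:00:0a", "00:00:00:00:00:0b", 10, b"hello")
fp = encapsulate(frame, ingress_id=101, egress_id=102)
# Hypothetical topology: leaf 101 -> spine 201 -> leaf 102
tables = {101: {102: 201}, 201: {102: 102}}
path, delivered = route(fp, tables, start=101)
print(path)               # [101, 201, 102]
print(delivered == frame)  # True
```

Note that spine 201 never looks at the inner MAC addresses; it only needs a next hop for switch-ID 102.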

Switch addresses are assigned automatically, and the routing table is computed for all unicast and multicast destinations.

FabricPath Support

FabricPath is supported only on dedicated hardware:

- Nexus 7000 F1 and F2 modules (F-Series modules)

- Nexus 5500 Series switches

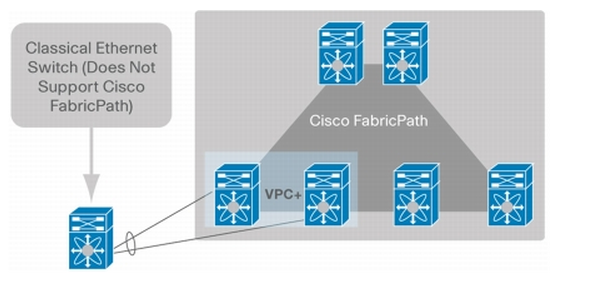

These platforms can run Layer 2 technologies such as FabricPath concurrently with Layer 3 technologies. Devices that do not support FabricPath can still be redundantly connected to two separate FabricPath switches using vPC+.

FabricPath uses IS-IS for the routing part of the process, but there is no need to know IS-IS to configure FabricPath. FabricPath also enables other mechanisms, such as RPF checks and a TTL.
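RPF and TTL both exist to contain loops. The sketch below applies both checks to a multi-destination frame. It assumes a hypothetical per-tree RPF table mapping an (FTag, source switch-ID) pair to the single port on which such frames are expected; the port names and the starting TTL of 32 are illustrative only, not FabricPath defaults.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class MultiDestFrame:
    src_switch_id: int  # switch that injected the frame into the fabric
    ftag: int           # multi-destination tree the frame travels on
    ttl: int

def check_and_decrement(frame: MultiDestFrame, in_port: str, rpf_table: dict) -> Optional[int]:
    """Return the TTL to forward the frame with, or None if it must be dropped."""
    # RPF: on a given tree, frames from a given source must arrive on the
    # port pointing back toward that source; anything else may be a loop.
    if rpf_table.get((frame.ftag, frame.src_switch_id)) != in_port:
        return None
    # TTL: a frame arriving with TTL 1 cannot be forwarded any further,
    # so a frame caught in a transient loop eventually dies.
    if frame.ttl <= 1:
        return None
    return frame.ttl - 1

# Hypothetical RPF entry: on tree 1, frames from switch 101 arrive on eth1/1.
rpf = {(1, 101): "eth1/1"}
print(check_and_decrement(MultiDestFrame(101, 1, 32), "eth1/1", rpf))  # 31
print(check_and_decrement(MultiDestFrame(101, 1, 32), "eth1/2", rpf))  # None (RPF failure)
print(check_and_decrement(MultiDestFrame(101, 1, 1), "eth1/1", rpf))   # None (TTL exhausted)
```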

A major benefit is that FabricPath enables ECMP at Layer 2, currently up to 16 ways, and latency is reduced because traffic follows the shortest path to the destination.
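Layer 2 ECMP needs a per-flow choice among the equal-cost next hops so that frames of one flow stay in order. The sketch below hashes a flow onto at most 16 next hops. SHA-256 over (source MAC, destination MAC, VLAN) is a stand-in chosen for determinism; the hash fields and algorithm used by the hardware are platform-specific, and the spine switch-IDs are hypothetical.

```python
import hashlib

MAX_ECMP_WAYS = 16  # limit quoted in the text

def pick_next_hop(flow, next_hops):
    """Pick one of up to 16 equal-cost next hops for a flow.

    Every frame of the same flow hashes to the same next hop, so a flow
    stays in order while different flows spread across the paths.
    """
    if not 1 <= len(next_hops) <= MAX_ECMP_WAYS:
        raise ValueError("expected between 1 and 16 equal-cost next hops")
    key = "|".join(str(field) for field in flow).encode()
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:4], "big")
    return next_hops[bucket % len(next_hops)]

spines = [201, 202, 203, 204]  # hypothetical equal-cost spine switch-IDs
flow = ("00:00:00:00:00:0a", "00:00:00:00:00:0b", 10)  # (src MAC, dst MAC, VLAN)
first = pick_next_hop(flow, spines)
print(first in spines, first == pick_next_hop(flow, spines))  # True True
```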

General Use

There is no need to configure port-channels: within the Layer 2 domain, bandwidth between Access and Distribution switches can scale up to 16 ways with ECMP, and availability is maintained if links go down.

The 16-way ECMP limit can be worked around by using logical ports: a port-channel can be used and counts as a single way.
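Because a port-channel counts as one ECMP way, traffic is effectively balanced in two stages: ECMP picks a way, then the port-channel picks a member link. The sketch below models that with a stand-in SHA-256 hash salted differently per stage; the uplink layout (16 port-channels of 4 members each) and the link names are hypothetical.

```python
import hashlib

MAX_ECMP_WAYS = 16  # limit quoted in the text

def flow_hash(flow, salt):
    # Stand-in hash, not the hardware algorithm; the salt lets the two
    # stages make independent choices for the same flow.
    key = (salt + "|" + "|".join(str(field) for field in flow)).encode()
    return int.from_bytes(hashlib.sha256(key).digest()[:4], "big")

def pick_link(flow, ecmp_ways):
    """ecmp_ways: up to 16 ways, each a list of member links (one link = plain port)."""
    if not 1 <= len(ecmp_ways) <= MAX_ECMP_WAYS:
        raise ValueError("expected between 1 and 16 ECMP ways")
    members = ecmp_ways[flow_hash(flow, "ecmp") % len(ecmp_ways)]
    return members[flow_hash(flow, "port-channel") % len(members)]

# Hypothetical uplinks: 16 port-channels with 4 member links each.
ways = [[f"po{p}-member{m}" for m in range(1, 5)] for p in range(1, 17)]
print(len(ways), sum(len(w) for w in ways))  # 16 64
link = pick_link(("00:00:00:00:00:0a", "00:00:00:00:00:0b", 10), ways)
print(link)
```

Here 16 ECMP ways reach 64 physical links, which is the point of using port-channels as logical ports.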

Technical Overview

Prerequisites and restrictions

- The FabricPath feature must be enabled in the default and non-default VDCs

- The Enhanced Layer 2 licence is required

- On Nexus 7000, FabricPath must be enabled on F-Series modules

- FabricPath interfaces can only carry FabricPath traffic

- STP does not run inside a FabricPath topology

- Specific restrictions apply to PVLANs:

  - All VLANs in a private VLAN must be in the same VLAN mode (CE or FP). If VLANs of different modes are mixed inside the same private VLAN, they will not be active

  - FabricPath ports cannot be put into a private VLAN

- Hierarchical static MAC addresses and static routes are not supported

- On F-Series modules, user-configured static MAC addresses are programmed on all forwarding engines that have ports in that VLAN

- FabricPath is supported only on

Terminology

Leaf Devices

Are devices at the edge of the FabricPath topology. Leaf devices have both standard Ethernet ports and FabricPath ports, and they map MAC addresses directly to switch ports.

Spine Devices

Are devices at the core of the FabricPath topology. Spine devices connect only to the FabricPath topology and forward traffic based solely on the Switch-ID.

Switch-ID

Is a value that identifies the switch inside a FabricPath topology. By default, Switch-IDs are assigned by the Dynamic Resource Allocation Protocol (DRAP), but they can also be assigned manually; in that case, a check is performed to prevent conflicts. Additionally, if two FabricPath topologies merge and a conflict is found, it is resolved by stalling the affected interfaces. Switch-IDs are used to route frames.
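The effect of automatic assignment, manual assignment with a conflict check, and conflict detection on merge can be sketched as below. This is explicitly NOT the DRAP protocol: it only models the outcome (unique IDs per fabric, rejected manual duplicates, conflicts found when two fabrics meet). The 1 to 4094 ID range is an assumption based on a 12-bit switch-ID space, and the switch names are hypothetical.

```python
import random

VALID_IDS = range(1, 4095)  # assumption: 12-bit switch-ID space, 1..4094

class SwitchIdAllocator:
    """Simplified stand-in for switch-ID assignment within one fabric."""

    def __init__(self, seed=None):
        self.in_use = {}  # switch-ID -> switch name
        self.rng = random.Random(seed)

    def assign_auto(self, name):
        free = [i for i in VALID_IDS if i not in self.in_use]
        if not free:
            raise RuntimeError("switch-ID space exhausted")
        sid = self.rng.choice(free)
        self.in_use[sid] = name
        return sid

    def assign_manual(self, name, sid):
        if sid not in VALID_IDS:
            raise ValueError(f"switch-ID {sid} out of range")
        owner = self.in_use.get(sid)
        if owner is not None and owner != name:
            raise ValueError(f"switch-ID {sid} already used by {owner}")
        self.in_use[sid] = name
        return sid

    @staticmethod
    def conflicts_on_merge(a, b):
        """Switch-IDs claimed by different switches in two merging fabrics."""
        shared = a.in_use.keys() & b.in_use.keys()
        return sorted(sid for sid in shared if a.in_use[sid] != b.in_use[sid])

fabric_a = SwitchIdAllocator(seed=1)
fabric_a.assign_manual("leaf1", 101)
fabric_a.assign_auto("spine1")
fabric_b = SwitchIdAllocator(seed=2)
fabric_b.assign_manual("leaf9", 101)
print(SwitchIdAllocator.conflicts_on_merge(fabric_a, fabric_b))  # [101]
```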

Conversational Learning

Is the way MAC address tables are populated with FabricPath. Edge switches learn a MAC address only if it belongs to a device connected to a local edge port, or if it is a remote MAC address in an active conversation with a known MAC address connected to a local edge port. Traffic flooded from a remote switch does not populate the MAC table of an edge switch. Spine switches forward traffic based only on the Switch-ID.
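The learning rule can be captured in a few lines. In this sketch, local source MACs are always learned, while a remote source MAC is learned only when the frame is addressed to a MAC already known on a local edge port; broadcast and unknown-unicast floods therefore never qualify. The table layout, MAC addresses, port names and switch-IDs are hypothetical.

```python
LOCAL, REMOTE = "local", "remote"

class EdgeMacTable:
    """Sketch of conversational MAC learning on a FabricPath edge switch."""

    def __init__(self):
        self.table = {}  # MAC -> (LOCAL, port) or (REMOTE, switch-ID)

    def learn_local(self, src_mac, port):
        # Frames received on edge (CE) ports always teach their source MAC.
        self.table[src_mac] = (LOCAL, port)

    def from_fabric(self, src_mac, src_switch_id, dst_mac):
        """Process a frame arriving from the fabric; return True if src_mac was learned."""
        entry = self.table.get(dst_mac)
        # Learn only if the frame targets a known local MAC (an active
        # conversation). Floods to broadcast or unknown MACs are ignored.
        if entry is not None and entry[0] == LOCAL:
            self.table[src_mac] = (REMOTE, src_switch_id)
            return True
        return False

sw = EdgeMacTable()
sw.learn_local("aa:aa:aa:aa:aa:01", "eth1/1")
print(sw.from_fabric("bb:bb:bb:bb:bb:02", 102, "ff:ff:ff:ff:ff:ff"))  # False (broadcast)
print(sw.from_fabric("bb:bb:bb:bb:bb:02", 102, "aa:aa:aa:aa:aa:01"))  # True (conversation)
print(sw.table["bb:bb:bb:bb:bb:02"])  # ('remote', 102)
```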

VLAN Trunking

Is not necessary with FabricPath. VLANs must still be defined, but they do not need to be allowed on a trunk.

Classic Ethernet (CE)

Is normal Ethernet operation, including STP, MAC learning and so on. CE operations are performed by leaf devices, which form the link between CE and FabricPath.

Metrics

Are the values FabricPath uses to compute the best SPF path to the destination, similar to how link-state protocols build their SPF tree. The metrics can be displayed with # show fabricpath isis interface brief

- 1 Gbps has a cost of 400

- 10 Gbps has a cost of 40

- 20 Gbps has a cost of 20
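To see how these costs steer path selection, here is a Dijkstra SPF sketch over a hypothetical two-leaf, two-spine fabric with an extra direct 1 Gbps leaf-to-leaf link. Dijkstra is the standard SPF algorithm used by link-state protocols; this is an illustration, not FabricPath's IS-IS implementation. It also records every equal-cost first hop, which is what makes ECMP possible.

```python
import heapq

COST_1G, COST_10G = 400, 40  # metrics quoted in the text

def spf(graph, source):
    """Dijkstra returning {destination: (cost, set of equal-cost first hops)}."""
    dist = {source: 0}
    first_hops = {source: set()}
    heap = [(0, source)]
    done = set()
    while heap:
        d, node = heapq.heappop(heap)
        if node in done:
            continue
        done.add(node)
        for neighbor, cost in graph[node].items():
            nd = d + cost
            # Leaving the source, the first hop is the neighbor itself;
            # otherwise inherit the first hops that reached `node`.
            hops = {neighbor} if node == source else first_hops[node]
            if neighbor not in dist or nd < dist[neighbor]:
                dist[neighbor] = nd
                first_hops[neighbor] = set(hops)
                heapq.heappush(heap, (nd, neighbor))
            elif nd == dist[neighbor]:
                first_hops[neighbor] |= hops  # equal cost: keep every path
    return {n: (dist[n], first_hops[n]) for n in dist}

graph = {
    "leaf1":  {"spine1": COST_10G, "spine2": COST_10G, "leaf2": COST_1G},
    "spine1": {"leaf1": COST_10G, "leaf2": COST_10G},
    "spine2": {"leaf1": COST_10G, "leaf2": COST_10G},
    "leaf2":  {"spine1": COST_10G, "spine2": COST_10G, "leaf1": COST_1G},
}
cost, hops = spf(graph, "leaf1")["leaf2"]
print(cost, sorted(hops))  # 80 ['spine1', 'spine2']
```

Two 10 Gbps hops (40 + 40 = 80) beat the direct 1 Gbps link (400), and both spines tie, giving two equal-cost paths.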

Multipath Load Balancing

Is essentially ECMP. FabricPath can use multiple uplinks to forward traffic, which increases the available bandwidth. FabricPath routes can be displayed with # show fabricpath route

Multi-destination Trees

Are built for multicast, broadcast and flooded traffic. FabricPath can build multiple multi-destination trees to load-balance multi-destination frames, using the FTag carried in the FabricPath header. The FTag, chosen according to the type of destination (flooded unknown unicast, broadcast or multicast), selects a tree made up of the switches and interfaces on which the frame is forwarded. At each hop, the FTag is used to forward the frame on the interfaces that belong to that tree.

In the diagram, FTag 1 identifies multi-destination tree 1 and FTag 2 identifies multi-destination tree 2.

- FTag 1 is used for flooded unknown unicast, broadcast and multicast traffic because Switch121 is the highest-priority switch

- FTag2 is used only for multicast traffic

Priority is defined as follows:

- Highest root priority

- If tied, highest system-ID

- If tied, highest switch-ID
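The tie-breaking order above maps directly onto a lexicographic comparison of (root priority, system-ID, switch-ID), where the highest tuple wins. The candidate values below are hypothetical; system-IDs are written as 48-bit integers so they compare numerically.

```python
from typing import NamedTuple

class RootCandidate(NamedTuple):
    name: str
    root_priority: int  # compared first, higher wins
    system_id: int      # first tie-breaker, higher wins
    switch_id: int      # second tie-breaker, higher wins

def elect_root(candidates):
    """Pick the switch with the highest (root priority, system-ID, switch-ID)."""
    return max(candidates, key=lambda c: (c.root_priority, c.system_id, c.switch_id))

switches = [
    RootCandidate("Switch111", 64, 0x00AA00000001, 111),
    RootCandidate("Switch121", 255, 0x00AA00000002, 121),
    RootCandidate("Switch122", 255, 0x00AA00000001, 122),
]
print(elect_root(switches).name)  # Switch121 (ties on priority, wins on system-ID)
```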

Multicast Forwarding

FTags and tree pruning built from IGMP information are used to forward multicast traffic. Edge (leaf) devices perform IGMP snooping, and membership reports are carried across FabricPath in Group Membership LSPs (GM-LSPs). IS-IS and IGMP snooping work together to build per-VLAN, group-based multicast trees.
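Pruning can be pictured as keeping only the tree branches that lead from the ingress switch to switches with group members. The sketch assumes the membership learned from GM-LSPs has already been collected into a set of switch names; how IS-IS floods and stores that information is not modeled, and the tree and switch names are hypothetical.

```python
def prune_tree(tree, ingress, members):
    """Return the tree edges a frame must follow from `ingress` to reach
    every switch in `members`; branches without members are pruned.

    tree: undirected adjacency of one multi-destination tree.
    members: switches that reported receivers for the group and VLAN.
    """
    kept = []

    def visit(node, parent):
        # True if this node, or anything beyond it (away from ingress), has members.
        needed = node in members
        for child in tree[node]:
            if child != parent and visit(child, node):
                kept.append((node, child))
                needed = True
        return needed

    visit(ingress, None)
    return sorted(kept)

tree = {
    "spine1": ["leaf1", "leaf2", "leaf3"],
    "leaf1": ["spine1"],
    "leaf2": ["spine1"],
    "leaf3": ["spine1"],
}
print(prune_tree(tree, "leaf1", {"leaf3"}))  # [('leaf1', 'spine1'), ('spine1', 'leaf3')]
```

The branch toward leaf2 is pruned because no receiver behind it joined the group.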

vPC+

Devices that do not support FabricPath can still be attached to FabricPath edge/leaf devices by using vPC+. The device connects to two edge/leaf devices through a single port-channel, and the two FabricPath switches emulate a single switch toward the rest of the FabricPath network. Packets sent by the emulated switch are sourced with an emulated switch-ID, and the other switches in the FabricPath topology see only that emulated switch-ID.

The devices must be connected like any vPC pair, with a peer link and a peer keepalive. The peer link is a regular FabricPath link and can be used by orphan ports for direct communication.
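The value of the emulated switch-ID is that remote switches learn one stable location for hosts behind the vPC+ port-channel. The sketch below shows a remote MAC entry that stays the same whichever peer forwards the frame. The switch-IDs and MAC address are hypothetical, and the assumption that orphan-port frames keep the peer's own switch-ID is labeled as such in the code.

```python
def outer_source_switch_id(peer_switch_id, from_vpc_plus_port, emulated_switch_id):
    """Source switch-ID a vPC+ peer writes into the FabricPath header (sketch).

    Frames received on the vPC+ port-channel are sourced from the shared
    emulated switch-ID. Assumption: frames from orphan ports keep the
    peer's own switch-ID.
    """
    return emulated_switch_id if from_vpc_plus_port else peer_switch_id

EMULATED_ID = 1000  # hypothetical emulated switch-ID
PEERS = (101, 102)  # hypothetical vPC+ peer switch-IDs

# A remote switch learns the host behind the vPC+ port-channel. Whichever
# peer the port-channel hash picks, the learned switch-ID is the same,
# so the remote MAC entry never moves between the two peers.
remote_mac_table = {}
for peer in PEERS:
    remote_mac_table["cc:cc:cc:cc:cc:01"] = outer_source_switch_id(peer, True, EMULATED_ID)
print(remote_mac_table)  # {'cc:cc:cc:cc:cc:01': 1000}
```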
