Overview of DC design

- Core layer: high-speed packet switching for flows going in and out of the DC

- Aggregation layer: service module integration, Layer 2 domain definition, STP processing, FHRP and other critical functions

- Access layer: Physical connection for end hosts

The access layer can operate at Layer 2 or Layer 3. The aggregation layer supports modules that provide services such as security, load balancing, content switching, SSL offload, IDS and IPS.

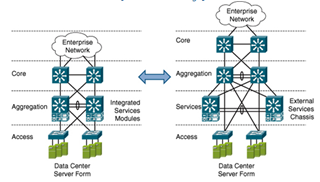

Large DCs use a three-tier design, whereas smaller DCs can make do with a two-tier design.

Three layer model benefits

Layer 2 domain sizing: if a VLAN needs to be extended across multiple switches, the sizing occurs at the aggregation layer. If the access layer does not exist, the core needs to extend the VLANs. Extending VLANs through the core leads to STP blocking and broadcast control issues.

Service module support: the aggregation layer can be used to provide shared services across all access switches. This lowers the TCO and reduces complexity by lowering the number of components.

Support for NIC teaming and HA clustering: these require Layer 2 adjacency between NICs.

VLAN extension through the core is not recommended but can be necessary. Such designs introduce the risk of global failures. OTV should be considered if such a design is needed.

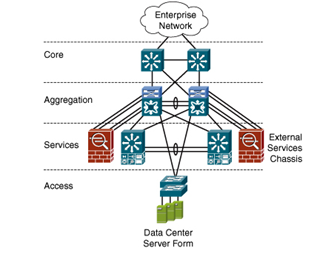

Service layer

Services such as the following can be integrated inside the aggregation layer:

- load balancing

- security features

Services can be integrated using service modules. This saves rack space and reduces cooling requirements and cabling. An alternative approach is to use a separate service layer. Using another pair of 6500s frees ports that can then be used for traditional access usage. If new services are required or expansion is needed, additional modules can be installed in the dedicated 6500s.

The Nexus 7k does not support service modules.

Dedicated services appliances

Another alternative is to use separate service appliances. Services are then offered by standalone devices connected to the aggregation layer.

The design choice is made with the following considerations:

- Power and rack space

- Performance and throughput : Standalone appliances can offer the best performance

- Fault tolerance

- Required services: Some services can be supported on an appliance but not on a service module. For example, VPN is supported on the ASA but not on the service module

These designs are not mutually exclusive and can be combined.

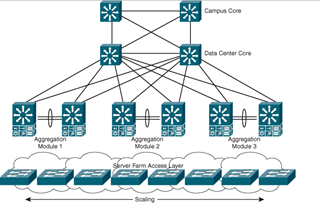

Data center core design

The main goal of the DC core is to interconnect all the aggregation switches. A DC core is not always needed but is recommended if multiple aggregation modules exist. The DC core also interconnects the data center with the campus core.

Design consideration

- 10GE port density: A single pair of core switches may not be sufficient to support the required density

- Domains and policies: Separating the campus core from the DC core helps isolate each domain for QoS, ACLs, troubleshooting and maintenance

- Growth: The impact of implementing a DC core after the initial deployment can be huge

The DC core acts as the gateway between the DC applications and the campus core.

Layer 3 characteristics

Most of the time the Layer 2/3 boundary is located on the aggregation switches, and the aggregation-to-core links are Layer 3. This allows for STP avoidance, bandwidth scalability and fast convergence. The core should run OSPF or EIGRP and load balance traffic between the campus core and the aggregation layer using CEF hashing. At least two equal-cost routes to the server subnets must be present.

By default, CEF load balancing hashes on the Layer 3 source and destination addresses; Layer 4 information can be added to the hash.
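To illustrate why adding Layer 4 information matters, here is a minimal sketch of hash-based path selection. It is not the actual CEF algorithm: the SHA-256 hash, the `pick_path` helper and the addresses are assumptions chosen for illustration only.

```python
import hashlib

def pick_path(paths, src_ip, dst_ip, src_port=None, dst_port=None):
    """Pick one equal-cost path from a hash of the flow fields.

    With only IP addresses this mimics a Layer 3-only hash; passing
    ports adds Layer 4 information to the hash input.
    """
    key = f"{src_ip}|{dst_ip}"
    if src_port is not None and dst_port is not None:
        key += f"|{src_port}|{dst_port}"
    digest = hashlib.sha256(key.encode()).digest()
    return paths[int.from_bytes(digest[:4], "big") % len(paths)]

paths = ["agg1", "agg2"]  # hypothetical equal-cost next hops

# Layer 3 only: every session between the same two hosts uses the same path.
l3_choices = {pick_path(paths, "10.1.1.10", "10.2.2.20") for _ in range(4)}

# Layer 3 + Layer 4: sessions with different source ports can use different paths.
l4_choices = {
    pick_path(paths, "10.1.1.10", "10.2.2.20", sport, 443)
    for sport in range(49152, 49216)
}
```

With a Layer 3-only hash, a heavy talker pair is pinned to a single link; including ports gives the hash more entropy to spread sessions across the equal-cost routes.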

OSPF IGP Recommendations

Two main suggestions when using OSPF in the Datacenter :

- Use an NSSA from the DC core. An NSSA limits LSA propagation while still allowing route redistribution. A default route can be advertised to the aggregation layer, and routes going out can be summarized.

- Change the reference bandwidth to 10000 (Mbps) to reflect 10GE capacities. By default, NX-OS uses a reference bandwidth of 40000 (Mbps).
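The effect of the reference bandwidth can be checked with a short calculation. This sketch assumes the usual OSPF cost rule (reference bandwidth divided by interface bandwidth, truncated, with a minimum of 1 and a maximum of 65535); the `ospf_cost` helper is hypothetical, and 100 Mbps is the classic IOS default.

```python
def ospf_cost(interface_mbps, reference_mbps=100):
    """OSPF interface cost: reference / interface bandwidth, clamped to 1..65535."""
    return min(65535, max(1, reference_mbps // interface_mbps))

# With a 100 Mbps reference, 1GE and 10GE links are indistinguishable.
legacy = (ospf_cost(1_000), ospf_cost(10_000))

# With a 10000 Mbps reference, 10GE becomes the preferred (cheaper) link.
tuned = (ospf_cost(1_000, 10_000), ospf_cost(10_000, 10_000))

# With the NX-OS default of 40000 Mbps, 10GE costs 4.
nxos_default = ospf_cost(10_000, 40_000)
```

Whatever value is chosen, it should be the same on every router in the OSPF domain so that costs are comparable end to end.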

EIGRP IGP Recommendations

Four recommendations for EIGRP in the Datacenter :

- Advertise a default summary route to the Datacenter Access layer using ip summary-address eigrp at the aggregation layer

- If other routes exist, filter them with distribute lists

- Summarize Datacenter Access subnets from the aggregation layer

- Use passive-interface default to only activate the participating interfaces
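As an illustration of the summarization step, the sketch below computes the summary that covers a set of access subnets and formats it in the address-and-mask form that `ip summary-address eigrp` expects. The subnets and the AS number 100 are hypothetical values chosen for the example.

```python
import ipaddress

# Hypothetical access-layer subnets behind one aggregation module.
access_subnets = [ipaddress.ip_network(f"10.10.{i}.0/24") for i in range(8)]

# Collapse contiguous subnets into the smallest covering set of prefixes.
summary = list(ipaddress.collapse_addresses(access_subnets))

# Format each summary as an interface-level command (AS 100 is an assumption).
commands = [
    f"ip summary-address eigrp 100 {net.network_address} {net.netmask}"
    for net in summary
]
# commands == ['ip summary-address eigrp 100 10.10.0.0 255.255.248.0']
```

Allocating access subnets in contiguous, power-of-two blocks per aggregation module is what makes a single summary like this possible.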

Aggregation Layer Design

Scalability in the Aggregation Layer

The Aggregation Layer consists of multiple pairs of interconnected switches. One pair is called a module.

- STP Scaling : Using multiple aggregation modules limits the size of the Layer 2 domain and constrains a failure to one module.

- Access Layer density scaling : Bandwidth needs tend to grow at the access layer, and uplinks to the aggregation layer tend to migrate to 10GE. The current maximum number of 10GE ports that can be placed in an aggregation switch is 64, with the WS-X6708-10G-3C in a 6509. Using multiple modules provides a higher 10GE port density.

- HSRP scaling : Using two aggregation switches allows for gateway redundancy mechanisms, of which HSRP is the most widely used. The recommendation is to limit the number of HSRP instances to 500, with a hello time of 1 second and a hold time of 3 seconds.

- Application services scaling : The aggregation layer supports application services through service modules. Service modules can be deployed in virtual contexts, each context behaving like an independent device with its own policies.
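The port-density and HSRP limits above can be turned into a rough sizing estimate. This is only a sketch: the `modules_needed` helper is hypothetical, it assumes each access switch uses one 10GE uplink to each switch of the aggregation pair, and it assumes the 500-instance HSRP ceiling applies per aggregation module.

```python
import math

MAX_10GE_PER_AGG_SWITCH = 64  # figure quoted above (6509 with WS-X6708-10G-3C)
MAX_HSRP_GROUPS = 500         # recommended ceiling quoted above

def modules_needed(access_switches, hsrp_groups, reserved_ports=0):
    """Estimate how many aggregation modules (switch pairs) are required.

    reserved_ports: 10GE ports per aggregation switch kept for core
    uplinks and the inter-switch trunk (an assumption of this sketch).
    """
    usable = MAX_10GE_PER_AGG_SWITCH - reserved_ports
    by_ports = math.ceil(access_switches / usable)
    by_hsrp = math.ceil(hsrp_groups / MAX_HSRP_GROUPS)
    return max(1, by_ports, by_hsrp)

port_bound = modules_needed(100, 300, reserved_ports=8)  # limited by 10GE ports
hsrp_bound = modules_needed(40, 1200)                     # limited by HSRP groups
```

Whichever constraint is hit first (ports or HSRP instances) determines the number of modules.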

STP design

The two STP modes most used inside the datacenter are MST and RSTP (in its per-VLAN form, Rapid PVST+).

RSTP is recommended over MST for the following reasons :

- RSTP converges rapidly and already includes the PVST enhancements such as UplinkFast and BackboneFast. Both RSTP and MST are valid choices.

- MST and RSTP use the same algorithms for convergence.

- Access Layer uplink failures are detected within 300ms to 2s, depending on the number of VLANs.

- RSTP and MST should be combined with Root Guard, BPDU Guard, Loop Guard, Bridge Assurance and UDLD to achieve a stable, secure and predictable STP environment.

- MST requires consistent configuration to avoid regionalization.

Bridge Assurance is preferred over Loop Guard but they should not be enabled at the same time.

Globally :

- RSTP is easier to configure, deploy and maintain

- MST is an IEEE standard (802.1s); the per-VLAN RSTP variant discussed here (Rapid PVST+) is Cisco proprietary

- MST handles BPDUs more efficiently than RSTP: its BPDU load does not depend on the number of VLANs, whereas RSTP's does.

- MST BPDUs cannot be bridged. If appliances or service modules are deployed, RSTP is recommended because RSTP BPDUs can be bridged.
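The BPDU efficiency point can be made concrete with a back-of-the-envelope count. The sketch assumes per-VLAN RSTP sends one BPDU per VLAN on each trunk every hello interval, while MST sends a single BPDU per trunk that carries all instances; the `bpdus_per_hello` helper and the numbers are illustrative.

```python
def bpdus_per_hello(trunks, vlans_per_trunk, mode):
    """Count BPDUs a switch sends per hello interval on its trunks."""
    if mode == "rapid-pvst":
        # One spanning-tree instance, hence one BPDU, per VLAN per trunk.
        return trunks * vlans_per_trunk
    if mode == "mst":
        # One BPDU per trunk, independent of the number of VLANs.
        return trunks
    raise ValueError(f"unknown STP mode: {mode}")

# Hypothetical aggregation switch: 20 trunks carrying 300 VLANs each.
pvst_load = bpdus_per_hello(20, 300, "rapid-pvst")
mst_load = bpdus_per_hello(20, 300, "mst")
```

This is why the per-VLAN mode is bounded by the number of VLANs and trunks, while MST scales with trunks only.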

Integrated Services Modules

Service modules can provide services like content switching, firewalling, SSL offload, IDS and others.

For redundancy, Modules can be deployed in two different ways :

Active/Standby Pair

This model is the most used because it offers the most predictable behavior. If the service module requires Layer 2 adjacency with the servers, this is the model of choice.

The following modules support this design :

- CSM

- FWSM 2.x

- SSL

The disadvantages of this model are the underutilization of the access layer uplinks, the service modules and the switch fabrics. This model relies on aligning HSRP, STP and the active service module on the same aggregation switch.

Active/Active

Most of the time this model is deployed through the use of contexts :

Newer service modules such as the CSM and FWSM 3.1 now support this: multiple contexts can be deployed and be active or standby independently of each other. With this model, the alignment must be done per VLAN and per context.

Inbound Path Preference

When a client sends a request to the virtual IP of a virtual server, the CSM chooses a real physical server from a pool in the server farm. This choice is based on the configured load-balancing algorithms and on policies such as access rules.

RHI (Route Health Injection) allows a Cisco CSM or ACE module in a Cisco 6500 to install a host route if the virtual server is operational. This establishes a path preference with the campus core so that all sessions to a specific VIP go to the aggregation switch where the primary service is active.
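The RHI behavior can be sketched as a simple rule: a /32 host route exists for a VIP only while its virtual server is operational on the switch holding the active module. The data structure and the `injected_host_routes` helper below are hypothetical and only model the decision, not the module's actual implementation.

```python
def injected_host_routes(virtual_servers):
    """Return the host routes a switch would inject for its healthy, active VIPs."""
    return sorted(
        f"{vs['vip']}/32"
        for vs in virtual_servers
        if vs["active"] and vs["operational"]
    )

# Hypothetical state on the aggregation switch with the active service module.
agg1 = [
    {"vip": "10.20.5.80", "active": True, "operational": True},
    {"vip": "10.20.5.81", "active": True, "operational": False},  # all real servers down
]
# The peer holds the standby module, so it injects nothing.
agg2 = [
    {"vip": "10.20.5.80", "active": False, "operational": True},
    {"vip": "10.20.5.81", "active": False, "operational": False},
]

routes_agg1 = injected_host_routes(agg1)
routes_agg2 = injected_host_routes(agg2)
```

Because only the switch with the active, healthy service advertises the host route, the campus core prefers that switch for the VIP, and the route disappears when the virtual server goes down.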

Nexus 7000 at the aggregation layer

The Nexus 7000 series offers two important new features :

VDC

The Virtual Device Context (VDC) feature divides a single chassis into multiple virtual switches. Each VDC operates in standalone mode with its own configuration files, physical ports, and separate control and management planes. A pair of Nexus 7000s can be used to replace four Cisco 6500s and merge aggregation and core into only two chassis.

Traffic from one VDC to another cannot be forwarded inside the chassis. To interconnect two VDCs, the data must first exit the first VDC through a physical port and re-enter the chassis through a physical port of the second VDC. The exception is the default VDC, which can manage the other VDCs.

As the default VDC owns critical operations like CoPP, VDC resource allocation and reload operations, no Layer 3 interface of the default VDC should be exposed to production.

VDCs allow for the following designs :

- Split-core topology : VDCs can be used to build two separate and redundant DC cores with only one pair of Nexus 7000s. This can be useful for migration or merging operations.

- Multiple Aggregation blocks : One Aggregation block consists of a pair of switches. If multiple aggregation blocks are needed, VDCs can be used to deploy them without the need for additional physical switches.

- Service Sandwich : VRFs can be used to create a Layer 3 hop that separates the servers from the services of the service chain at the aggregation layer. This approach is called a VRF sandwich because the design consists of two VRFs with the service chain in between. VDCs can replace the VRFs, which adds management plane separation.

The following considerations must be taken into account when using VDCs :

- Always use pairs of 7Ks when using VDCs. The failure of a single chassis containing all the VDCs would result in a complete outage.

- The control and management planes of different VDCs are separate, but the resources of the physical chassis are shared. CPU and memory can be assigned per VDC to avoid a depletion of resources that would affect the entire box.

- Review CoPP policies and rate limits as they are applied collectively from the default VDC to all VDCs because only one logical in-band control plane interface exists.

- The TCAM of a line card is shared across all VDCs that own physical ports on it. Allocating an entire line card to a VDC is preferred over distributing the ports of one line card across multiple VDCs. Allocating ports to a VDC by line card port group is also preferred, even when the line card does not require it.

- Using VDCs instead of VRFs will typically require more interfaces, because interfaces cannot be shared between VDCs.
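The line card recommendation can be checked against a planned allocation with a few lines of code. This is a sketch over a hypothetical data model, a mapping of `(slot, port)` to VDC name; it does not query a real switch.

```python
from collections import defaultdict

def shared_line_cards(port_to_vdc):
    """Return the slots whose ports are split across more than one VDC."""
    vdcs_by_slot = defaultdict(set)
    for (slot, _port), vdc in port_to_vdc.items():
        vdcs_by_slot[slot].add(vdc)
    return {
        slot: sorted(vdcs)
        for slot, vdcs in vdcs_by_slot.items()
        if len(vdcs) > 1
    }

# Hypothetical plan: slot 1 belongs entirely to "core", slot 2 is split.
plan = {
    (1, 1): "core",
    (1, 2): "core",
    (2, 1): "core",
    (2, 2): "agg",
}
split_cards = shared_line_cards(plan)
```

Any slot reported here has its TCAM shared between VDCs and is a candidate for re-planning.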

vPC

vPC makes it possible to build a loop-free access/aggregation topology where no link is blocked and all links can be used. vPC enables Layer 2 MEC, where one port channel connects to two different chassis.

vPC best practices :

- The two Cisco Nexus 7k switches need to be connected through redundant 10GE links on separate 10GE line cards to form the peer link.

- Dual attach all access switches to the vPC peers. A switch or host connected to only one vPC peer consumes bandwidth on the peer link and, if the vPC peer it is attached to is lost, may be isolated from the network.

- If dual attachment is not possible, implement a separate trunk between the vPC peers to carry the non-vPC VLANs.

- Only vPC VLANs should be carried across the vPC peer link. If vPC and non-vPC VLANs have to share the peer link, use the dual-active exclude interface-vlan command to decouple the SVI status from a peer link failure.

- Do not route the peer keepalive over the peer link. To ensure system resiliency, the two must use different paths. To form or recover a peer link, the peer keepalive must already be connected.

- Use a separate VRF and front-panel ports for the peer-keepalive link. An alternative is to use the OOB management interface. Be careful with dual supervisors, as only one management port is active at a time.
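Some of these best practices lend themselves to an automated sanity check. The sketch below works on a hypothetical description of a vPC domain (the dictionary keys and the `check_vpc` helper are invented for illustration) and only covers the peer link, keepalive path and dual-attachment rules.

```python
def check_vpc(cfg):
    """Return a list of best-practice violations for a vPC domain description."""
    issues = []

    # Peer link: at least two ports, spread over at least two line cards.
    slots = {slot for slot, _port in cfg["peer_link_ports"]}
    if len(cfg["peer_link_ports"]) < 2 or len(slots) < 2:
        issues.append("peer link should use redundant links on separate line cards")

    # Keepalive must take a path other than the peer link.
    if cfg["keepalive_path"] == "peer-link":
        issues.append("peer keepalive must not be routed over the peer link")

    # Single-attached switches need a separate trunk for non-vPC VLANs.
    single = sorted(
        name for name, peers in cfg["access_switches"].items()
        if len(set(peers)) < 2
    )
    if single and not cfg.get("non_vpc_trunk", False):
        issues.append(f"single-attached switches without a separate trunk: {single}")

    return issues

good = {
    "peer_link_ports": [(1, 1), (2, 1)],
    "keepalive_path": "mgmt0",
    "access_switches": {"acc1": ["n7k-1", "n7k-2"]},
}
bad = {
    "peer_link_ports": [(1, 1), (1, 2)],
    "keepalive_path": "peer-link",
    "access_switches": {"acc1": ["n7k-1"]},
}
good_issues = check_vpc(good)
bad_issues = check_vpc(bad)
```

A check like this could be run against a design spreadsheet before any configuration is pushed.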

vPC enables two types of designs :

- Single sided vPC : this design is used when the access switches do not support vPC. A standard EtherChannel is used and connects to the two upstream vPC peers. In a standard EtherChannel configuration, a maximum of 8 members can be present in the bundle.

- Double sided vPC : Both the access and aggregation layers support vPC. In this case the vPCs are connected back to back, and up to 16 members can be bundled into one channel.

Access Layer Design

Overview of the Access Layer

The main goal of the access layer is to provide physical attachment for the end hosts; it can operate in Layer 3 or Layer 2 mode. The access layer is the first oversubscription point of the Datacenter. Three main models exist :

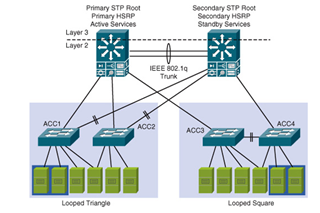

Layer 2 Looped

In this design, VLANs are extended into the aggregation layer. Layer 2 services like NIC teaming and clustering, and other services like firewalling and SLB, can be provided across a Layer 2 model. Layer 3 is performed at the aggregation layer.

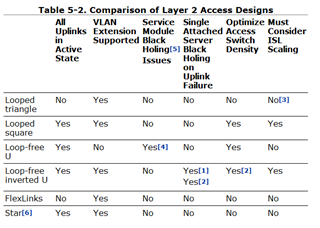

Two topologies can be used for a Layer 2 looped design : looped triangle and looped square.

The looped triangle is the most implemented design in the enterprise data center. It provides a deterministic design when STP, HSRP and the active service modules are aligned on the same aggregation switch. One of the two uplinks to the aggregation layer is blocked, wasting 50% of the potential bandwidth.

The looped square provides the same deterministic behavior as the looped triangle. Here, STP blocks the trunk between the two access switches, increasing the available bandwidth to the aggregation layer. Active/active uplinks align well with active/active service module designs.

The main risk is that a Layer 2 loop can span the entire Layer 2 domain and bring the network down. RSTP is recommended for these designs, and additional loop-prevention features such as BPDU Guard, Root Guard and UDLD should be used.

Layer 2 Loop Free

Even with a loop-free design, STP is still needed as a loop-prevention tool in case of miscabling. Loop-free designs have the following attributes :

- All active uplinks : No uplinks are blocked.

- Layer 2 server adjacency : With a loop free U design across the access switches, and with an inverted loop free U design across the aggregation switches.

- Stability : Less chance of loops due to misconfiguration

Today, VSS and vPC allow loop-free designs based on MEC. These technologies are preferred if they are available.

Loop free U topologies allow :

- VLAN containment into access switches pairs

- No STP blocking

- Layer 2 service modules black hole traffic on uplink failure. In case of uplink failure, the active service module can be reached via the second aggregation switch

To overcome the black-holing issue, active/standby contexts can be deployed. For example, the ACE service module supports contexts that can fail over if the uplinks are lost on the primary aggregation switch. If contexts are not supported by the service module, the entire service needs to switch over, which is not a desirable solution.

Loop free inverted U allows :

- VLAN extension

- No STP blocking

- Uplink failure only black holes directly attached servers

- Support for all service module implementations

Layer 3

Layer 3 can be configured at the access layer. Each access switch then needs to be connected to the aggregation switches through a dedicated IP subnet, and Layer 3 routing is performed on the access switch. This design constrains the Layer 2 domain and removes the possibility of VLAN extension.

ECMP can be used for multipathing over up to 8 paths. Layer 3 also provides better convergence time than Layer 2.

Layer 3 access offers the following benefits :

- Broadcast and failure domains are reduced.

- Server stability requirements are supported, including the isolation of traffic such as multicast

- All uplinks are available and up to 8 paths can be used with ECMP

- Fast convergence

The main drawbacks are IP address space management and the limitation of Layer 2 adjacency to an access switch pair.
